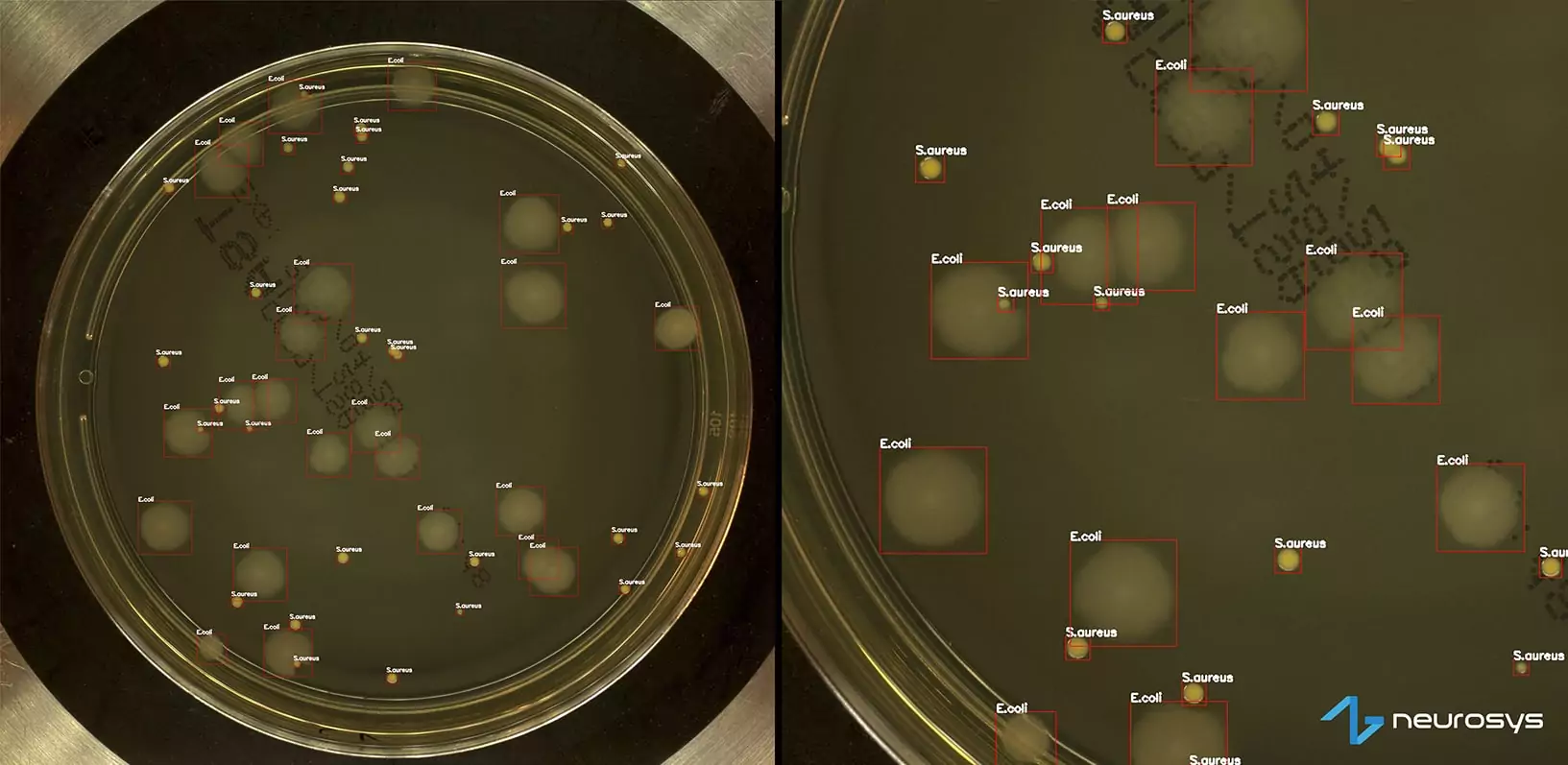

When we say we keep tabs on the latest technologies, we mean it, especially when it comes to research and development that strives to break new ground with artificial intelligence and machine learning solutions every day. However, AI/ML algorithms need to be fed with data, such as photos of Petri dishes used to classify bacterial colonies, so that they can learn over time and become more and more precise.

To provide data on objects in professional environments, particularly in the context of our Nsflow platform, we often use devices that capture images. 3D cameras therefore seem to be a natural step forward in processes connected with industrial automation, for example robot guidance, quality inspection (including true shape control and dimensioning), or predictive maintenance (based on wear assessment, among other things).

So, to know what's what, our research and development unit has been testing a Photoneo MotionCam-3D M. Does it live up to our expectations? What results can you expect? Keep reading.

What is a 3D camera?

But let us start off on the right foot and explain what exactly we're talking about. A three-dimensional camera is designed to capture images that convey depth, which isn't achievable with run-of-the-mill devices such as traditional cameras. Depending on the type, 3D cameras use different technologies. For example, stereo vision cameras try to mimic human binocular vision (much as artificial intelligence tries to mimic human behavior). There are also cameras that use infrared light to measure distance, based on how long the beam takes to travel to the object and back. Some types combine cameras with infrared projectors.

Again, based on the type, 3D cameras can use multiple sensors to capture different points of view. These perspectives are then merged and converted into a single 3D image or video.
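To make the two measurement principles above a bit more tangible, here is a minimal Python sketch. It is not taken from any camera SDK: the function names and the sample numbers are our own illustrative assumptions, and real devices add calibration, filtering, and noise handling on top of these textbook formulas.

```python
# Illustrative sketch only: textbook formulas, hypothetical function names.

SPEED_OF_LIGHT_M_S = 299_792_458.0  # metres per second


def tof_distance_m(round_trip_s: float) -> float:
    """Time-of-flight: the beam travels to the object and back, so halve the path."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Stereo vision: depth = focal length * baseline / disparity.

    focal_px     - focal length expressed in pixels
    baseline_m   - distance between the two viewpoints in metres
    disparity_px - horizontal shift of the same point between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # A 10-nanosecond round trip corresponds to roughly 1.5 m.
    print(f"ToF distance: {tof_distance_m(10e-9):.3f} m")
    # Assumed sample values: 1000 px focal length, 10 cm baseline, 50 px disparity.
    print(f"Stereo depth: {stereo_depth_m(1000.0, 0.1, 50.0):.3f} m")
```

Note how, in the stereo case, a smaller disparity means a larger depth, which is one reason accuracy tends to drop as objects move further away from the camera.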

Use cases

As we mentioned at the beginning, we focus on industrial use cases to improve our clients' manufacturing processes and more. Here, we identify four areas in which 3D cameras can play a crucial role, mainly by giving robots sight, while our AI algorithms provide an understanding of what they see:

- Robot guidance: picking and placing/loading and unloading (irregular shapes included), packaging, and assembly – adjusting movement automatically

- Inspection: measuring (distances, angles, holes), verifying completeness, fault recognition, quality assurance

- Recognition: sorting objects based on their shape, size, and other features

- Engineering: three-dimensional model creation and reverse engineering

Why Photoneo?

The 3D camera market offers plenty of solutions. We decided to test the Photoneo MotionCam-3D M, which its maker describes as the world's highest-resolution and highest-accuracy 3D camera and which is designed to work with machine learning solutions, our cup of tea. MotionCam is available in five sizes that differ in scanning range (up to 3 meters) and accuracy. It can be used in two modes: camera and scanner. It uses technology similar to stereo vision cameras, but instead of two cameras Photoneo uses a camera and a structured light projector.

Photoneo MotionCam-3D testing

Getting started

The camera can be used in two modes: camera (video for dynamic scenes) and scanner (for more precision). It uses a single Ethernet cable for both power and data transfer (Power over Ethernet, PoE), so no additional power cord is needed. Usually, this kind of power supply requires either a special router that is able to power the device or a PoE injector that plugs in between the camera and the computer or router and supplies the power. Suffice it to say, mounting the camera wasn't particularly strenuous.

Scanning the object

Photoneo MotionCam-3D can scan objects in two setups: either the camera is still and an object with markers is in motion, or the other way round, with the camera moving and the object remaining still.

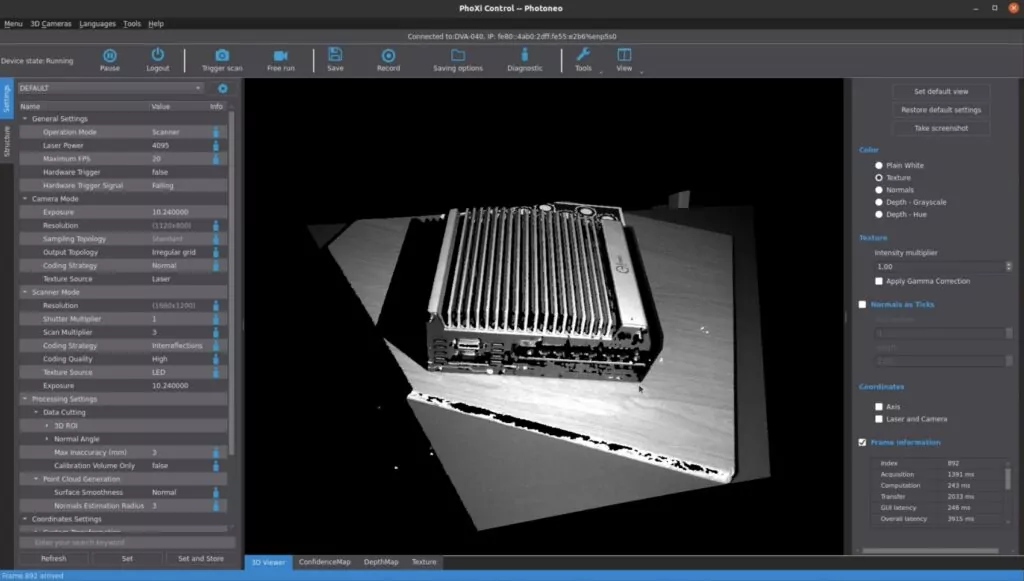

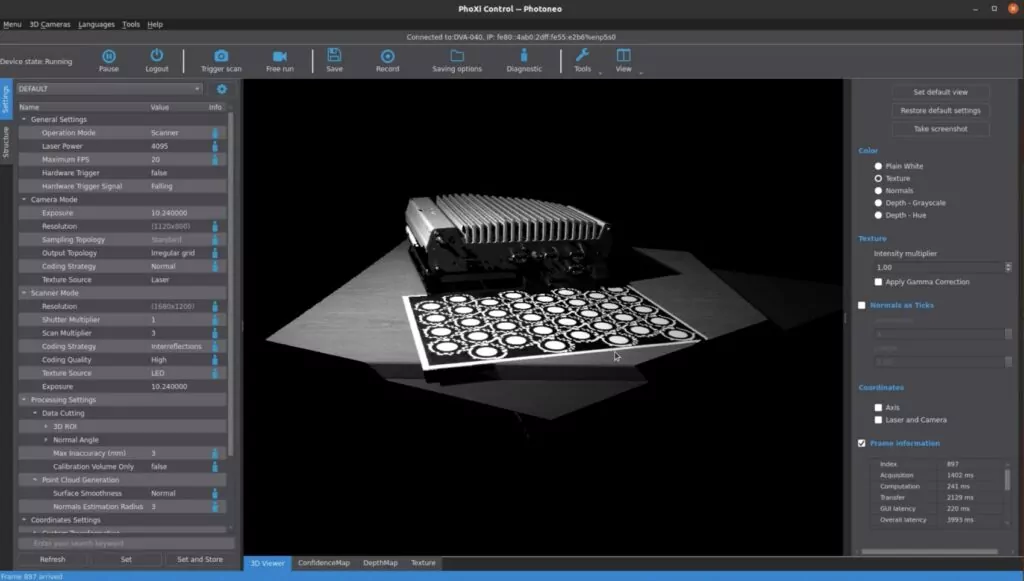

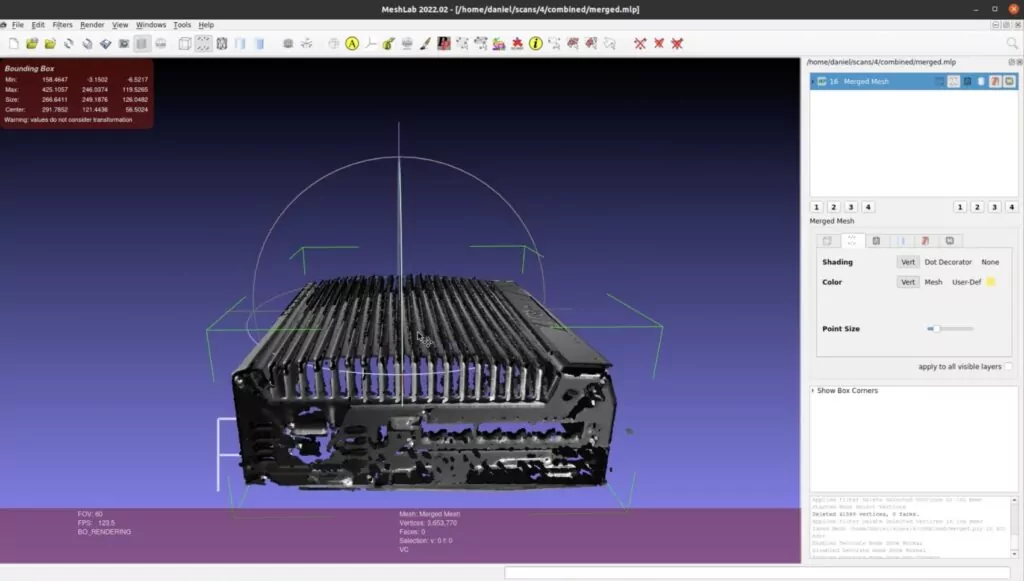

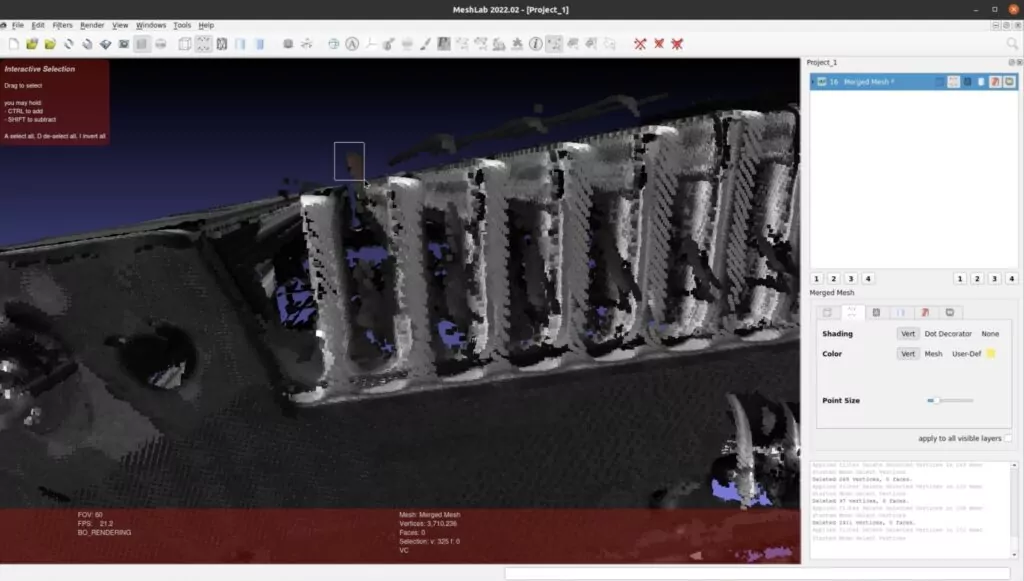

For the purpose of this article, we decided to use the former setup, in which the camera remains still. As the object, we picked an industrial PC that is part of our Nsflow Box solution; a precise model of it might help our clients plan its placement in their production halls. We observed that scanning works best when the object is placed on a matte surface.

The system can bring together multiple measurements for the most precise scans. The output of the object scanning is delivered in the form of point clouds.

PS Are 3D scans safe?

Lasers are safe for inanimate objects. However, remember not to look directly at the laser source, as it can severely damage your sight.

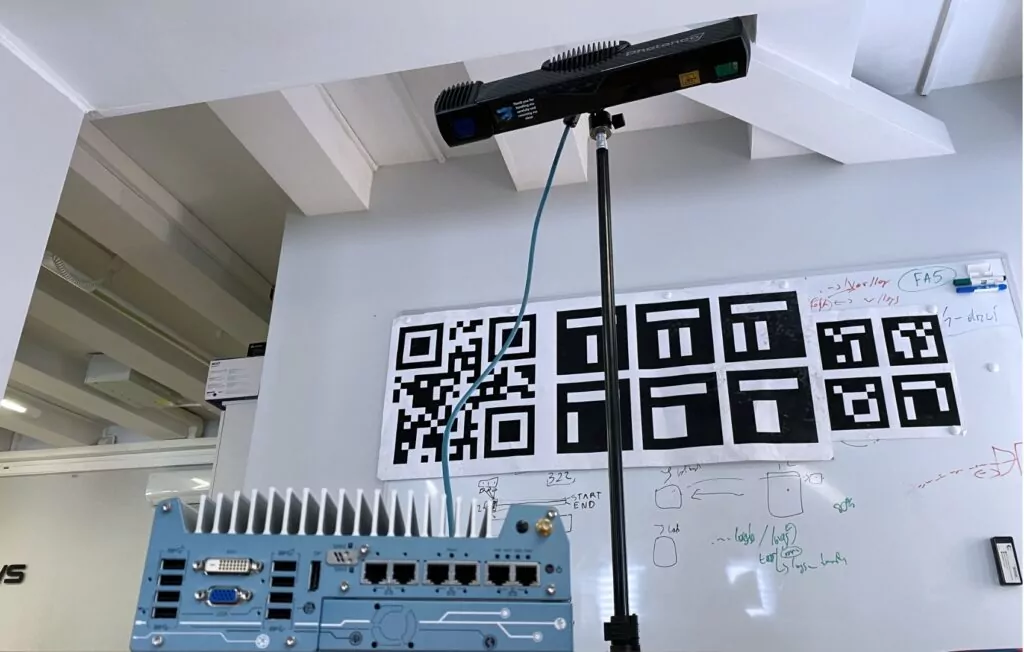

Reference point markers

For scanning, we used the reference point markers supplied with the camera by its manufacturer. They simplify the process of merging the scans. When the object is in motion, the markers should remain fixed relative to it so that they can serve as a reference.
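To illustrate why shared markers make merging easier, here is a minimal NumPy sketch of one common approach: estimating the rigid transform between two scans from matched marker positions (the Kabsch algorithm) and then stacking the aligned point clouds. This is our own illustration under stated assumptions, not a description of how the Photoneo software works internally. The function names are hypothetical, and we assume that at least three non-collinear markers have already been detected and matched in both scans.

```python
import numpy as np


def estimate_rigid_transform(src: np.ndarray, dst: np.ndarray):
    """Kabsch algorithm: find R (3x3) and t (3,) so that dst ~= src @ R.T + t.

    src, dst - (N, 3) arrays with the same markers in the same order,
               expressed in the coordinate frames of two different scans.
    """
    src_centroid = src.mean(axis=0)
    dst_centroid = dst.mean(axis=0)
    # Cross-covariance of the centred marker sets.
    H = (src - src_centroid).T @ (dst - dst_centroid)
    U, _, Vt = np.linalg.svd(H)
    # Guard against a reflection (determinant of -1) sneaking in.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_centroid - R @ src_centroid
    return R, t


def merge_scans(scan_a: np.ndarray, scan_b: np.ndarray, R: np.ndarray, t: np.ndarray) -> np.ndarray:
    """Move scan_b into scan_a's frame and stack both point clouds."""
    return np.vstack([scan_a, scan_b @ R.T + t])


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Made-up marker positions as seen in scan B.
    markers_b = rng.uniform(-1.0, 1.0, size=(5, 3))
    # Simulate scan A's frame: rotate 30 degrees about Z, then shift.
    angle = np.deg2rad(30.0)
    R_true = np.array([[np.cos(angle), -np.sin(angle), 0.0],
                       [np.sin(angle),  np.cos(angle), 0.0],
                       [0.0,            0.0,           1.0]])
    t_true = np.array([0.1, -0.2, 0.05])
    markers_a = markers_b @ R_true.T + t_true

    R, t = estimate_rigid_transform(markers_b, markers_a)
    print("rotation recovered:", np.allclose(R, R_true))
    print("translation recovered:", np.allclose(t, t_true))
```

With real data the marker positions are noisy, so the recovered transform is a least-squares fit rather than an exact match, and a refinement step is often run on the full point clouds afterwards.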

Black & white

MotionCam-3D scans are black and white; they carry no information on the object's color. In the case of point clouds, though, that isn't very important. If such data is needed, you can connect an external camera that adds a color layer to Photoneo scans. The RGB camera needs to be in a fixed position with respect to the scanner and calibrated using the provided markers.
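As a rough illustration of why the RGB camera has to stay fixed and calibrated, the sketch below colors a point cloud by projecting each 3D point into the RGB image with a simple pinhole model. It is an assumption-laden example rather than the vendor's procedure: the function name is hypothetical, the intrinsic matrix `K` and the scanner-to-camera pose `R`, `t` are assumed to come from a prior calibration, and lens distortion and occlusions are ignored.

```python
import numpy as np


def colorize_points(points: np.ndarray, image_rgb: np.ndarray,
                    K: np.ndarray, R: np.ndarray, t: np.ndarray):
    """Assign an RGB color to each scanner point that the camera can see.

    points    - (N, 3) point cloud in the scanner frame
    image_rgb - (H, W, 3) uint8 image from the external camera
    K         - (3, 3) camera intrinsics (assumed known from calibration)
    R, t      - rotation (3, 3) and translation (3,) from scanner to camera frame
    Returns (colors, valid): (N, 3) uint8 colors and an (N,) boolean mask.
    """
    pts_cam = points @ R.T + t              # scanner frame -> camera frame
    z = pts_cam[:, 2]
    in_front = z > 0                        # points behind the camera are skipped
    safe_z = np.where(in_front, z, 1.0)     # avoid dividing by zero or negatives
    uvw = pts_cam @ K.T                     # pinhole projection
    u = np.round(uvw[:, 0] / safe_z).astype(int)
    v = np.round(uvw[:, 1] / safe_z).astype(int)

    height, width = image_rgb.shape[:2]
    valid = in_front & (u >= 0) & (u < width) & (v >= 0) & (v < height)

    colors = np.zeros((points.shape[0], 3), dtype=np.uint8)
    colors[valid] = image_rgb[v[valid], u[valid]]
    return colors, valid


if __name__ == "__main__":
    # Tiny made-up example: a 4x4 image and three points.
    image = np.arange(48, dtype=np.uint8).reshape(4, 4, 3)
    K = np.array([[1.0, 0.0, 2.0],
                  [0.0, 1.0, 2.0],
                  [0.0, 0.0, 1.0]])
    pts = np.array([[0.0, 0.0, 1.0],    # lands inside the image
                    [5.0, 0.0, 1.0],    # projects outside the image
                    [0.0, 0.0, -1.0]])  # behind the camera
    colors, valid = colorize_points(pts, image, K, np.eye(3), np.zeros(3))
    print(valid)
    print(colors)
```

If the RGB camera moves relative to the scanner after calibration, `R` and `t` no longer match reality and the colors end up on the wrong points, which is why the fixed mounting matters.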

June 30 update: a color version of MotionCam-3D is now available.

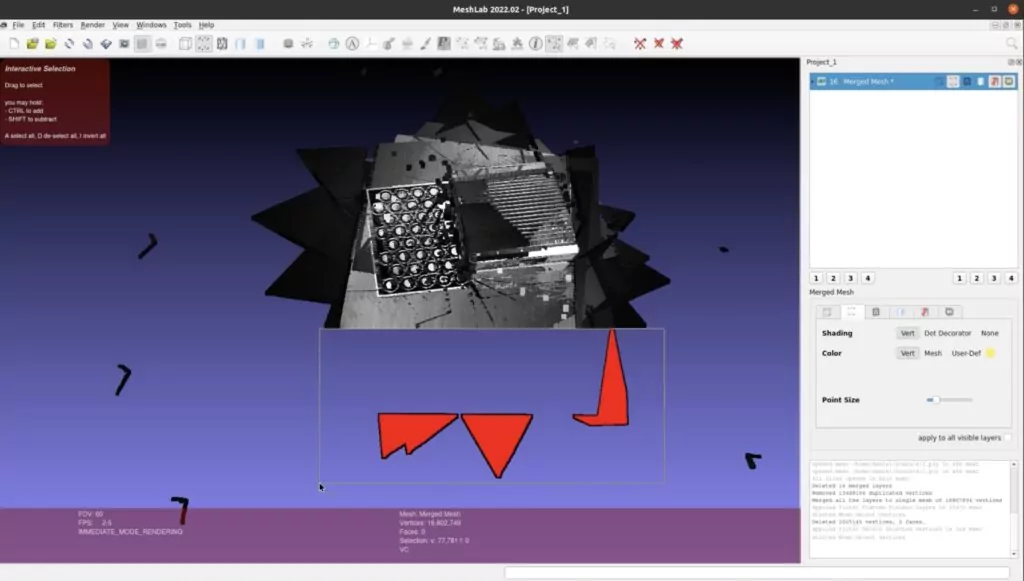

3D camera app

To collect point clouds, we used the Photoneo scanning software. The control panel let us handle the device, configure the sensor parameters, and visualize the output at the same time. To combine the points, convert them into a model, and clean the output, we used MeshLab.

The effects

We're aware that we selected a pretty demanding object to scan, particularly because of its ridged top surface. The truth is that the final result required some manual refinement and cleaning to map the object precisely. Still, as you can see in the pictures and videos provided, the 3D camera scanned our object successfully, and we're satisfied with the final result.

We expect that working with the camera for longer would allow us to get better and better at object positioning and scanning, and eventually to fully automate the process.

Now that we know how it works and what effects we can expect, we see plenty of potential ways to use 3D cameras with our Nsflow platform. That’s it for now, but we recommend you read about AI in production process optimization.