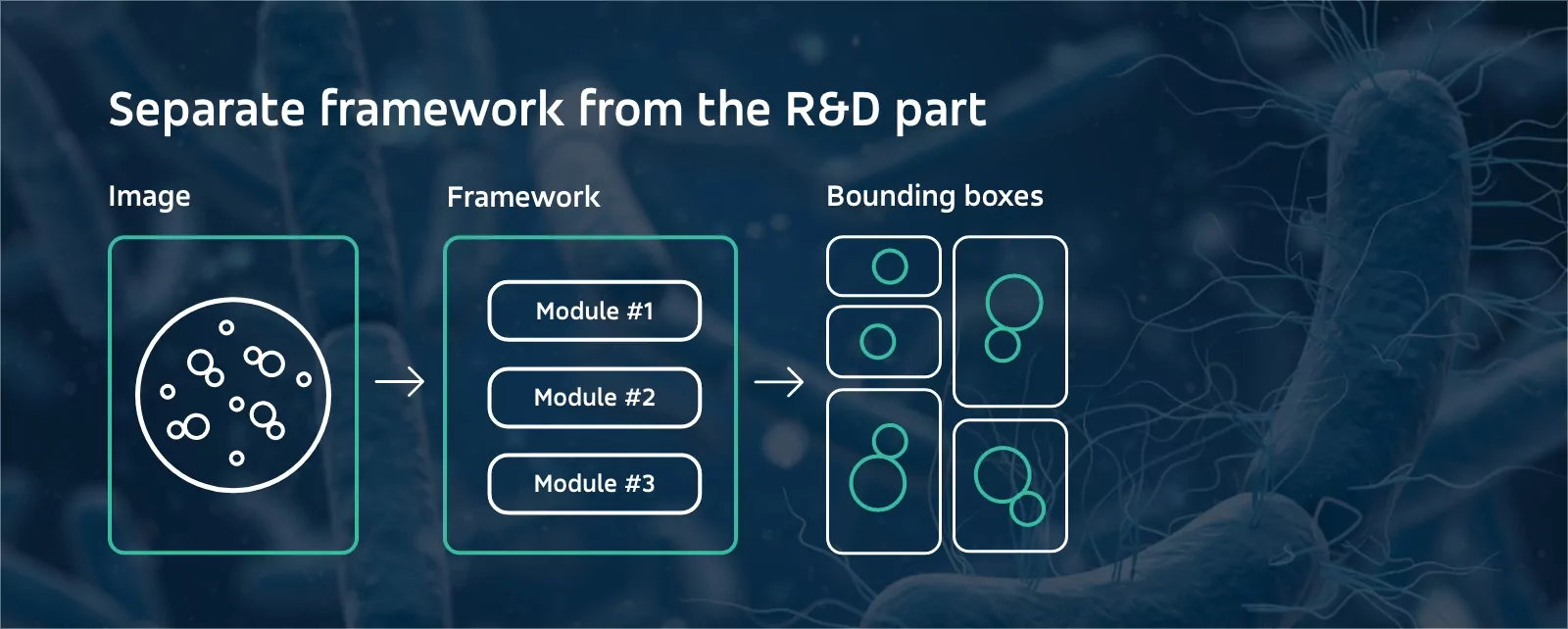

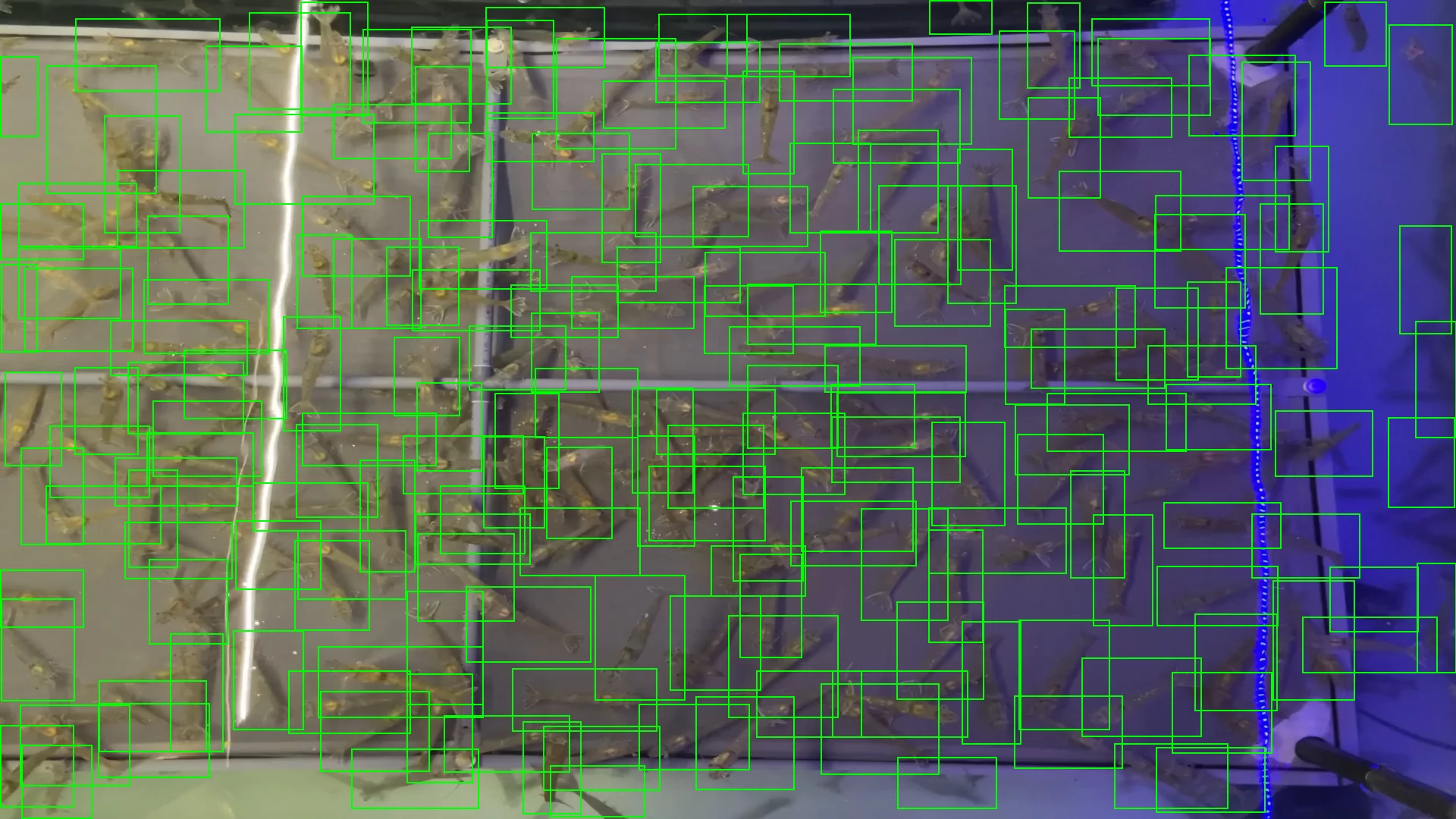

Erra is a modular, scalable solution that grew out of the bacteria recognition work carried out by our Research and Development team. The result is a system for efficient microbial identification and counting.

Our R&D team has prepared modules that contain neural network models together with instructions for preprocessing input images and for shaping and sanitizing the output data. Each module solves one specific type of problem (one problem per module) and can be tailored to our clients' needs. Any problem that can be reduced to image analysis and recognition, such as recognizing bacteria on Petri dishes, can in principle be addressed this way.
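A minimal sketch of the module structure described above (preprocess the input image, run the model, shape and sanitize the output), written in Python purely for illustration. The `Module` class, the stage names, and `toy_colony_model` are hypothetical; the toy function counts bright pixels and only stands in for a trained neural network. It does not reflect how Erra's models actually recognize bacteria.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical input format: a grayscale image as rows of pixel intensities.
Image = List[List[float]]


@dataclass
class Module:
    """Hypothetical module: one problem, three stages chained together."""
    problem: str
    preprocess: Callable[[Image], Image]
    model: Callable[[Image], float]  # stand-in for a neural network
    postprocess: Callable[[float], Dict[str, object]]

    def run(self, image: Image) -> Dict[str, object]:
        # Input processing -> model inference -> output shaping/sanitizing.
        return self.postprocess(self.model(self.preprocess(image)))


def normalize(image: Image) -> Image:
    # Scale intensities into [0, 1] relative to the brightest pixel;
    # fall back to 1.0 for an all-dark image to avoid division by zero.
    peak = max((px for row in image for px in row), default=0.0) or 1.0
    return [[px / peak for px in row] for row in image]


def toy_colony_model(image: Image) -> float:
    # Placeholder for a trained network: counts pixels above a fixed threshold.
    return float(sum(px > 0.5 for row in image for px in row))


def shape_count(raw: float) -> Dict[str, object]:
    # Sanitize the raw model output into a clean, non-negative integer count.
    return {"colony_count": max(0, round(raw))}


colony_counter = Module("colony counting", normalize, toy_colony_model, shape_count)
```

For example, `colony_counter.run([[0, 200, 10], [180, 5, 0]])` returns `{"colony_count": 2}`. Swapping only the `model` or `postprocess` stage is one way a module could be tailored to a different client problem while keeping the same overall shape.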

Modules wrapping neural networks can be created on demand by the team to address a wide range of problems. They are run by our execution engine, which is delivered as a NuGet package or as a dedicated web service. Alongside the engine we built a dedicated debugger, a module compiler, testing tools, and a high-workload stress-testing environment to ensure top-notch QA. Because modules live in separate files, they can be adjusted and updated without changing the execution engine.
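The separation between the engine and module files can be sketched as a small plugin loader. This is an illustration under stated assumptions, not Erra's implementation: the real engine ships as a NuGet package or web service, whereas here Python is used only for readability. The `ExecutionEngine` class, the `load`/`execute` methods, and the convention that each module file is Python source defining `run(data)` are all hypothetical.

```python
import tempfile
from pathlib import Path
from types import ModuleType
from typing import Any, Dict, Tuple


class ExecutionEngine:
    """Hypothetical engine that runs modules stored as separate files.

    Assumption: each module file is Python source that defines run(data).
    The engine never hard-codes module logic.
    """

    def __init__(self) -> None:
        self._modules: Dict[str, ModuleType] = {}

    def load(self, name: str, path: Path) -> None:
        # Compile the file fresh on every call, so an updated file is always
        # picked up while the engine code itself stays unchanged.
        module = ModuleType(name)
        source = path.read_text(encoding="utf-8")
        exec(compile(source, str(path), "exec"), module.__dict__)
        if not callable(getattr(module, "run", None)):
            raise ValueError(f"{path} does not define run(data)")
        self._modules[name] = module  # replaces any previously loaded version

    def execute(self, name: str, data: Any) -> Any:
        return self._modules[name].run(data)


def demo_hot_swap() -> Tuple[Any, Any]:
    """Load a module file, update it on disk, and reload it."""
    engine = ExecutionEngine()
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "counter.py"
        path.write_text("def run(data):\n    return len(data)\n", encoding="utf-8")
        engine.load("counter", path)
        before = engine.execute("counter", [1, 2, 3])
        # Update only the module file; the engine is untouched.
        path.write_text("def run(data):\n    return sum(data)\n", encoding="utf-8")
        engine.load("counter", path)
        after = engine.execute("counter", [1, 2, 3])
    return before, after
```

Here `demo_hot_swap()` returns `(3, 6)`: the same engine produces a different result once the module file is replaced. Note that executing module files this way assumes they come from a trusted source.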